Monkeys have navigated complex virtual environments using only their thoughts, thanks to brain-computer interface (BCI) technology. The work, by researchers at KU Leuven in Belgium, holds promise for people with paralysis, who might one day use similar systems to explore virtual worlds or gain more intuitive control over prosthetic limbs and assistive devices such as electric wheelchairs. The study, published in 2026, is a step toward brain-machine interfaces that improve mobility and interaction.

Pioneering Implantation and AI Integration

The research team, led by Peter Janssen, implanted three rhesus macaque monkeys with BCIs. A key innovation of this study was the use of not one, but three implants per animal. Each implant contained 96 electrodes, for a total of 288 electrodes per animal, and the three implants were placed in the primary motor cortex, the dorsal premotor cortex, and the ventral premotor cortex. The primary motor cortex is a well-established target in BCI research because of its direct involvement in executing physical movements; the inclusion of the premotor cortices is what sets this study apart. These areas are understood to play a crucial role in the higher-level planning and conceptualization of movement, suggesting a more abstract and potentially more intuitive source of signals for a decoder to interpret.

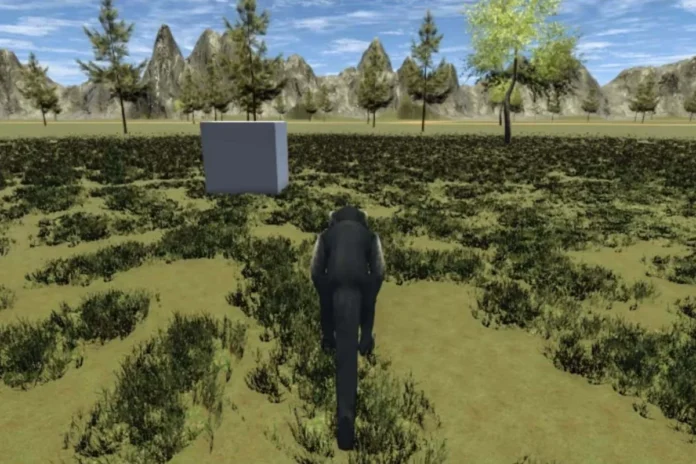

The electrical signals captured by these electrode arrays were then processed by an advanced artificial intelligence (AI) model. This AI was trained to decode the complex neural patterns associated with the monkeys’ intentions and translate them into commands that controlled virtual reality (VR) avatars. The monkeys observed the 3D virtual worlds displayed on a monitor, and their neural activity dictated the movement of their digital counterparts.
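The article does not describe the AI model the team used, so the following is only a minimal sketch of the general idea of decoding, using a plain ridge-regression decoder as a stand-in. It assumes synthetic data in which each of the 288 channels (three arrays of 96 electrodes, as reported above) responds linearly to an intended 2D velocity; the function names, the noise level, and the linear tuning model are all hypothetical.

```python
import numpy as np

N_ARRAYS = 3                 # from the article: three implants per animal
ELECTRODES_PER_ARRAY = 96    # from the article
N_CHANNELS = N_ARRAYS * ELECTRODES_PER_ARRAY  # 288 channels in total


def make_synthetic_session(n_samples, rng, tuning=None, noise=0.5):
    """Hypothetical data: channel activity is a linear function of the
    intended 2D velocity plus Gaussian noise (not the study's recordings)."""
    if tuning is None:
        tuning = rng.normal(size=(2, N_CHANNELS))
    velocity = rng.normal(size=(n_samples, 2))
    rates = velocity @ tuning + noise * rng.normal(size=(n_samples, N_CHANNELS))
    return rates, velocity, tuning


def fit_ridge_decoder(rates, velocity, alpha=1.0):
    """Closed-form ridge regression: W = (X^T X + alpha * I)^-1 X^T Y."""
    gram = rates.T @ rates + alpha * np.eye(rates.shape[1])
    return np.linalg.solve(gram, rates.T @ velocity)


def decode(rates, weights):
    """Map channel activity to a 2D velocity command for the avatar."""
    return rates @ weights


rng = np.random.default_rng(0)
train_rates, train_vel, tuning = make_synthetic_session(2000, rng)
test_rates, test_vel, _ = make_synthetic_session(500, rng, tuning=tuning)

weights = fit_ridge_decoder(train_rates, train_vel)
predicted_vel = decode(test_rates, weights)

# Correlation between decoded and intended velocity on held-out samples.
corr_x = np.corrcoef(predicted_vel[:, 0], test_vel[:, 0])[0, 1]
corr_y = np.corrcoef(predicted_vel[:, 1], test_vel[:, 1])[0, 1]
print(f"held-out correlation: x={corr_x:.3f}, y={corr_y:.3f}")
```

Real neural recordings are far noisier and less linear than this toy data, which is one reason practical decoders tend to be more elaborate than a single linear map.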

Unlocking Intuitive Navigation

The experimental setup allowed the monkeys to engage with virtual environments in increasingly complex ways. Initially, they were tasked with controlling a simple sphere moving across a virtual landscape from a fixed, first-person perspective. This foundational task allowed the researchers to establish a baseline understanding of how the BCI translated neural signals into directional commands.

The experiments then progressed to more dynamic and engaging scenarios. The monkeys were able to control animated monkey avatars from a third-person perspective, mirroring the control schemes common in video games. This demonstrated the BCI’s ability to adapt to different viewpoints and control paradigms. Further trials saw the monkeys navigating through virtual buildings, a significant achievement that required them to interact with the environment by opening doors and moving from one room to another. This level of interaction implies a sophisticated understanding and execution of sequential movements and spatial reasoning within the virtual space.

A More Natural Connection to Movement

The implications of this research are profound, particularly when contrasted with earlier BCI implementations in humans. Many previous human trials have relied on participants thinking about specific, often rudimentary, physical movements—such as imagining raising or lowering a finger—to control a cursor on a screen. While effective to a degree, this method can feel artificial and requires considerable training and effort.

Janssen posits that the placement of electrodes in the monkeys’ premotor cortices taps into a more abstract and intuitive representation of movement. "We cannot ask these monkeys, of course, but we just think that it’s a more intuitive way of controlling a computer, basically," Janssen stated. He elaborated on the current limitations of some BCIs, noting that human users have sometimes described the experience as akin to "trying to move your ears"—a reference to a foreign and often frustrating effort that can take weeks or months to master. The new approach, by accessing higher-level planning areas, aims to bypass this learning curve, offering a more direct and natural cognitive link to controlling external devices.

Bridging the Gap to Human Application

Janssen is optimistic about the potential for this technology to translate to human applications, particularly for individuals with paralysis. He envisions a future where people could intuitively navigate virtual worlds for rehabilitation, education, or entertainment, or exert more seamless control over electric wheelchairs. However, he acknowledges that significant research and development are still required before human trials can commence.

"There’s a bit of work necessary to know exactly where to implant a human because a lot of these areas are not very well known in humans, where they are exactly," Janssen explained. Despite these challenges, he remains confident. "But once we figure that out, it should be possible. It should actually be easier because you can explain to the human what they are supposed to do." This highlights a key advantage in human application: the ability to provide explicit instructions and leverage conscious understanding of desired actions.

Expert Endorsement and Broader Context

The groundbreaking nature of this research has garnered attention from leading BCI experts. Andrew Jackson, a researcher at Newcastle University in the UK, highlighted the remarkable adaptability demonstrated by the monkeys. "One impressive thing about the work is that the monkeys are able to control movement from different viewpoints and in different contexts in the same way," Jackson commented.

He suggests that the BCI’s success in multiple contexts points to its ability to access neural representations of movement that are inherently abstract. This abstract understanding allows the system to be flexible, much like a human gamer who can adapt a familiar controller to different video games. "I’ve got a bunch of different buttons I can press, and in different games I have to work out the specific mapping between those different buttons and the particular game," Jackson explained. "But it’s a pretty easy thing to do because there’s only so many combinations I need to try. If the new game actually involved me putting down the controller, going over and opening my fridge or something, then it would be much harder." This analogy underscores the sophisticated nature of the neural decoding achieved in Janssen’s study.
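Jackson's controller analogy can be sketched in code: one abstract decoded command is reused across contexts, and only the mapping from that command to the on-screen action changes. This is an illustration of the analogy, not the study's software. The `Intent` type, the three mapping functions, and the door-opening rule are all hypothetical, since the article does not say how the decoded signals were mapped to actions.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Intent:
    """Hypothetical abstract decoder output, each value in [-1, 1]."""
    forward: float
    turn: float


def drive_sphere(intent: Intent) -> dict:
    # First-person task: the same intent moves a sphere and the camera.
    return {"sphere_speed": intent.forward, "camera_yaw_rate": intent.turn}


def drive_avatar(intent: Intent) -> dict:
    # Third-person task: the same intent steers an animated avatar.
    return {"avatar_walk_speed": intent.forward, "avatar_heading_rate": intent.turn}


def drive_in_building(intent: Intent, near_door: bool = False) -> dict:
    # Building task: avatar control plus an assumed rule that pushing
    # forward while next to a door opens it.
    command = drive_avatar(intent)
    command["open_door"] = near_door and intent.forward > 0.5
    return command


# The "controller" (Intent) stays fixed; only the per-game mapping differs.
CONTEXTS: Dict[str, Callable[..., dict]] = {
    "sphere": drive_sphere,
    "avatar": drive_avatar,
    "building": drive_in_building,
}

intent = Intent(forward=0.8, turn=-0.2)
for name, mapping in CONTEXTS.items():
    print(name, mapping(intent))
```

In this framing, switching tasks means swapping one small mapping function while the decoded command stays the same, which is the kind of easy remapping Jackson describes.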

A Timeline of BCI Advancements

This research builds upon a growing body of work in BCI technology, with several promising human trials already conducted:

- 2024: A man with paralysis successfully piloted a virtual drone through a challenging obstacle course using a BCI that interpreted his thoughts of finger movements. This was achieved through an AI model that translated neural signals into drone commands.

- Prior to 2026: Another significant human trial demonstrated the ability of a BCI to convert imagined handwriting into text, allowing individuals to communicate through thought alone.

- 2024: Neuralink, a company co-founded by Elon Musk, announced its first human implantation of a BCI, enabling a participant to control a computer cursor with their thoughts. However, this development was later tempered by reports that approximately 85% of the electrode threads shifted within a month, significantly impacting control capabilities.

These milestones, while varied in their success and approach, collectively illustrate the rapid progress and ongoing innovation within the BCI field. The KU Leuven study’s focus on higher-level motor planning areas represents a crucial evolutionary step, aiming for more intuitive and versatile control systems.

The Future Landscape of Neurotechnology

The implications of Janssen’s research extend beyond immediate assistive applications. The ability to decode complex, abstract neural signals could revolutionize fields ranging from advanced prosthetics and exoskeletons to immersive virtual reality experiences and even novel forms of human-computer interaction. The development of BCIs that can interpret intentions rather than just simple motor commands opens the door to technologies that feel less like tools and more like extensions of the user’s own will.

While the path to widespread human application of this specific BCI technology will require further refinement and extensive clinical validation, the success in rhesus macaques provides a compelling proof of concept. It offers a tangible glimpse of a future in which barriers imposed by physical limitations can be overcome by decoding intentions directly from the brain. The ongoing effort to improve these interfaces continues to push the boundaries of neuroscience and engineering, promising a future where thought truly becomes action.